Congress will be very disappointed in you.

The New York Times Opinion section recently got in touch and asked me if I felt AI companies could be good. I told them I didn’t believe they could, not because I think AI is inherently evil, but because I don’t believe that a single company at a very large scale can have a single, consistent ethical vision:

Over three decades of watching the tech industry and watching big companies grow from tiny teams to global powers, I’ve observed the same pattern: Ethics don’t scale up. Tech companies like to start with a mission. Google wanted to connect the world’s information; Microsoft wanted to put a computer on every desktop; Twitter wanted to give all people a platform to publish their thoughts. These are good ideas — the stuff of TED Talks. But users show up with their own beliefs and ideas, by the millions. As a tech founder, you end up putting enormous work into making users behave (and stopping them from breaking the law). Lawsuits pour in, saying you did wrong, some because you’re a convenient target.

As companies grow, “good” is not a single universal thing that everyone will agree on. A veteran might feel strongly that it’s good to work for the Air Force; a peace activist might feel the opposite. That’s old news for anyone in business. What’s wild to me, though, is that AI companies seem to be destroying the very business environment they need to grow:

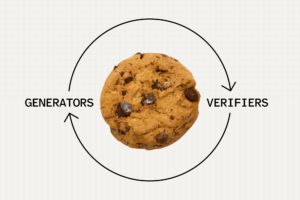

But regulation is absolutely in the interests of both America and the big A.I. companies themselves. Let me add two more terms people should know: “Google zero” and “model collapse.” Google zero (coined by Nilay Patel, the editor in chief of The Verge) is when Google stops sending traffic to websites and just provides an A.I. answer instead. When that happens, websites get less traffic, sell fewer ads and make less money. As a result, they may not be able to produce as much content. Model collapse is related: It’s when the A.I. models run out of knowledge to digest. What then? Do they excrete their own prose to redigest? Do they just give up?

I think it’s obvious that in a mature, civil society you’re going to need to regulate machines that can simulate humans to the point of believability. That’s been a primary theme of sci-fi for a full century now, going back to R.U.R., the early-twentieth-century play that gave us the term “robot.” We’ve been asking “how will we deal with intelligent machines?” for decades. But what we haven’t been asking is, “when intelligence simulators can make the content that we consume, what will generate new thoughts and discourse?” That’s the puzzle posed in the “Google zero” conversation. From the linked article in The Verge:

Earlier this year, a small site called HouseFresh, which is dedicated to reviewing air purifiers, published a blog post that really crystallized what was happening with Google and these smaller sites. HouseFresh managing editor Gisele Navarro titled the post “How Google is killing independent sites like ours,” and she had receipts. The post shared a whole lot of clear data showing what specifically had happened to HouseFresh’s search traffic — and how big players ruthlessly gaming SEO were benefiting at their expense.

Air purifiers are only the beginning. As Anil Dash recently wrote:

The threat to the open web is far more profound than just some platforms that are under siege. The most egregious harm is the way that the generosity and grace of the people who keep the web open is being abused and exploited. Those people who maintain open source software? They’re hardly getting rich — that’s thankless, costly work, which they often choose instead of cashing in at some startup. Similarly, volunteering for Wikipedia is hardly profitable. Defining super-technical open standards takes time and patience, sometimes over a period of years, and there’s no fortune or fame in it.

I don’t think AI companies can fix this, even though it would be in their best interest to do so, and that makes it a real challenge. This new wave of change is taxing the ecosystem of online thinking, and doing it in ways we could never have imagined. It makes me wonder: We could regulate AI, but could there also be a national blogging fund? Just imagining how it might work makes me very anxious. But maybe it’s time to try!