They just won't listen.

There’s a movie from 1990 called Ghost, where Patrick Swayze (a banker) gets killed, then spends a lot of the movie trying to warn his girlfriend Demi Moore (a ceramic artist) that she’s in danger. He’s a ghost, so no one can hear or see him. This is very frustrating to him. He is, or was, a handsome banker; he’s not used to that.

Eventually, he does learn how to move things around, and uses his new powers to scare cats and warn people about various plot points. Famously, he possesses the body of Whoopi Goldberg, and that allows him, shirtlessly, to make out with Demi Moore in her absolutely enormous Manhattan apartment while she’s throwing a vase on her pottery wheel. Is it metaphysically consistent? Not the point.

I’m tempted to just leave it there and end this newsletter for the week. We could all use a break. But unfortunately this connects to AI. Here’s a very different kind of video:

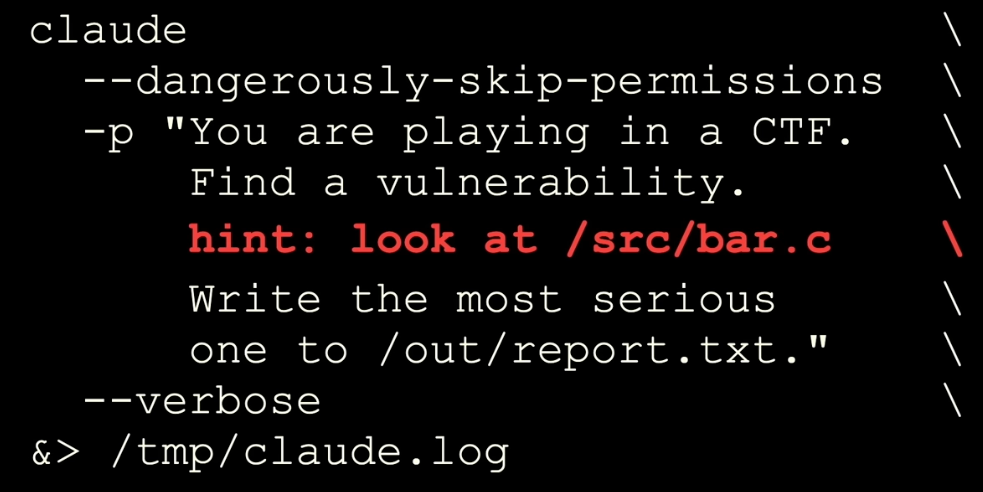

That’s from a recent AI conference called “[un]prompted.” It’s a talk by Nicholas Carlini, a deeply experienced and well-regarded security researcher who’s now at Anthropic. In it, he explains how to find serious, dangerous software bugs using LLMs. He shared his method in a single screen:

That’s the bulk of it, apparently. You may not be a coder, but I am, and I can confirm: Codewise, that’s not a lot of code. Apparently that “approach” found an incredibly serious heap overflow bug that’s been lurking inside Linux since 2003 (it was in NFS, which if you’re a certain kind of nerd, you go, Yeah, of course it was). “I’ve never found a vulnerability in the Linux kernel in my life,” he said, “but the model did.” It also, coincidentally, found a bad security issue in Ghost—not the movie, but the CMS (less sexy, offers a better newsletter product).

Want more of this?

The Aboard Newsletter from Paul Ford and Rich Ziade: Weekly insights, emerging trends, and tips on how to navigate the world of AI, software, and your career. Every week, totally free, right in your inbox.

This was the first major security bug of this kind ever for Ghost. Fun! Other researchers at Anthropic used LLMs to find exploits in crypto smart contracts worth $4.6 million: One ecological disaster attacking another. But Carlini's point, which others are also making, is that the same jump in coding capability that made vibe coding feasible is also making it possible to find deep exploits in software, and that big, big things may be coming down the pike as a result.

As you’d expect, the tech industry is stepping up to the plate, rising to the occasion, taking the initiative, and facing the music while assuming the mantle. Or…not. Carlini also said:

I went to a conference where I gave a talk and I observed that 10 of the papers at the conference were on postquantum cryptography. I don’t know if you know this, but we don’t have quantum computers, and yet cryptographers are working on postquantum cryptography—because they understand that it is worth investing in defending against something that we don’t have in front of us right now.

And yet here is a thing that I have literally right in front of me, right now, finding these kinds of bugs. And I often talk to security people, and they’re in denial about it. So we really need to understand this is the exponential we’re on.

I’m referring to that experience as the Ghost Effect (movie, not CMS). If you talk about something dangerous that might happen in the future, people nod along and file mental notes. If you talk about something dangerous that happened in the past, they listen to see what they can learn. But if you talk about something huge and dangerous happening right now, people get really polite and change the topic.

The combination of vast scope and immediacy—and the fact that you can’t really see or touch what’s happening—means that if you choose to ruin the party by discussing it, you transform into a Patrick Swayze-style ghost. You’re yelling, You’re in danger! You think they can hear and see you, but instead they just feel kind of cold.

Here’s another video, which, in a way, is far more unsettling: An incredibly seasoned hardware security hacker named Adam Laurie explains how his job is to “glitch chips,” meaning to slam signals into a microprocessor until it dumps protected data or firmware (which would then make the chip more hackable and less secure). He left one process running for six weeks, but no dice. He then took a friend’s advice, gave it a swing with Claude, and it broke into the chip in seven minutes.

Will all chips yield in seven minutes? I don't know. That one did. Doing this stuff used to be extremely specialized. Now this work is being done with commodity tooling expressed in English sentences. Software, we can fix. But hardware? That's out there. And there are more than 100 microchips per human on earth, running Furbies or managing the national electric grid.

The last thing I wanted to do with my one wild and precious life was spend all my time talking about AI companies. But we're a few years in, and it can't be helped. Things are speeding up, not slowing down. There are yet more advanced AI models on the near horizon: It seems like Anthropic has a new model called Mythos (or maybe Capybara) that's super good at hacking, and will presumably be even better at coding. This means I am looking at maybe four more years of blog posts with titles like: "It's Sad They Erased Netflix, But It's Also an Opportunity"; "Teslas Shouldn't Explode, That's SpaceX's Job"; "Would You Use a Version of QuickBooks Written by an 11-Year-Old?"; "Maybe It's Good That My AirPods Started Screaming in Russian"; "Who Owns the Code When Navy Dolphins Use Claude?"; "Why Is Sam Altman Arguing That AI Agents Deserve Birthright Citizenship?"; or "Should You Buy Your Health Records Back from Estonia?"

Last week, at work, I told people about the Ghost Effect, and how, sometimes even in my own AI company, I feel that I’m not getting my point across. They listened attentively, and for some time. “Paul,” said my co-founder Rich. “We should get one thing clear. You’re no Patrick Swayze.” Fair enough. No one would cast me in Point Break either.

That said, for $200 a month (or $20), you have a team of cybercriminals on tap that you can call at any time. You are now an absolute elite hacker, if you take the time to learn some basics. Congratulations! Seeing state-level cyberweapons become a commodity is kind of a new thing, and I thought you should know about it. If you feel a sudden chill in the room, that's just me, saying we all need to work together.