The More Things Change

In tech, the money is in innovation, but the careers are in predictability.

It says everything is going to work out great.

On the podcast this week, I spoke with my co-founder, Richard, about how AI is a little…boring lately.

As the technology stabilizes and matures, usage patterns are starting to appear. You can trust the chat for some things: summarizing documents, or generating Ghibli-esque images without ethical constraints, or helping you along with code. You need to validate findings in others, like when it makes up citations for research reports. But I don’t know how many revolutionary product releases await us. Probably one or two! There are billions of dollars of gas in the tank, but it feels like we’re starting to see the real limits of this exciting, weird new technology.

The way you can tell that this is happening is that the AI companies are actually releasing…products. Not just more stuff jammed into chat, or research prototypes, or random stuff trumpeted in a tweet. Anthropic has Claude Code (which is good). OpenAI, which promises to improve its product naming, recently offered up improved image quality, and is now rolling things out in a fairly normal way, with some fanfare and marketing. There are more standards and protocols, like MCP, and more de facto standardization, such as the OpenAI API becoming pretty much the norm. And there’s better documentation than there used to be.

The Aboard Newsletter from Paul Ford and Rich Ziade: Weekly insights, emerging trends, and tips on how to navigate the world of AI, software, and your career. Every week, totally free, right in your inbox.

This is happening because this technology is becoming better understood, more standardized—and more predictable. “Boring” is high praise in tech, because predictability is so valuable.

It’s starting to show up in daily work. This week we had a product demo here at Aboard, and it went very well. We asked our head of engineering how reliable the output was—if you put in the same query, will you get the same result? And the answer was, basically: Yes. Everything still has caveats, but our small engineering team, over about half a year, has learned how to write systems that prompt an LLM, get results back as data, and confirm that the data is what was expected. Were we there in 2006? Yes, but it couldn’t draw pictures of cats.

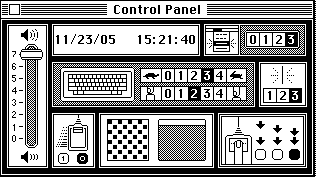
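The loop described above (prompt an LLM, get results back as data, confirm the data is what was expected) can be sketched roughly like this. It is a minimal illustration, not Aboard's actual system: `fake_llm`, `EXPECTED_FIELDS`, and the field names are all hypothetical stand-ins, and a real setup would swap `fake_llm` for a call to whatever model API you use.

```python
import json
from typing import Callable

# Hypothetical schema: the field names and types are illustrative only.
EXPECTED_FIELDS = {"title": str, "priority": int}


def validate(raw: str) -> dict:
    """Parse the model's reply as JSON and check it has the expected shape."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on non-JSON text
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for name, kind in EXPECTED_FIELDS.items():
        if not isinstance(data.get(name), kind):
            raise ValueError(f"field {name!r} is missing or not {kind.__name__}")
    return data


def ask(llm: Callable[[str], str], prompt: str, attempts: int = 3) -> dict:
    """Prompt the model, retrying until the reply validates or attempts run out."""
    last_error = None
    for _ in range(attempts):
        try:
            return validate(llm(prompt))
        except ValueError as err:
            last_error = err
    raise RuntimeError(f"no valid reply after {attempts} attempts: {last_error}")


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; always returns the same canned reply."""
    return '{"title": "Fix login bug", "priority": 2}'


if __name__ == "__main__":
    print(ask(fake_llm, "Summarize this ticket as JSON."))
```

The point of the design is that the rest of the program never touches raw model text. It either gets a dictionary in the expected shape or a clear error, which is a big part of what makes the output feel predictable.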

Is this bad? Is this good? Well, Apple is one of the most valuable companies in history, right? And we’re always waiting for the next big thing from them, the next iPhone. But I want to show you how the Mac’s settings looked in version 1.0, in 1984:

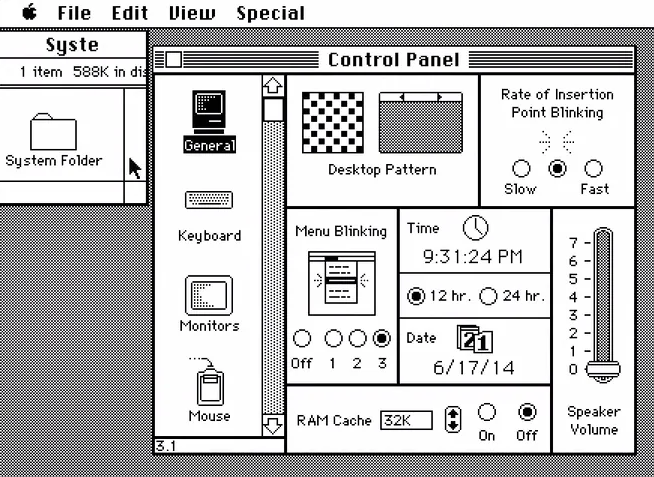

And here’s what they looked like by 1987:

And here’s what the control panel looks like today:

There’s a pretty obvious difference—but also a pretty obvious pattern. That’s because users count on control panels and settings to be roughly the same, version after version. You can add search, and work at higher resolutions, but the basic idea just doesn’t change that much.

Another way to put it: The money is in innovation, but the careers are in predictability.

It’s probably too early to predict that AI is entering its predictable zone. But when you think about the drama over DeepSeek—the excitement wasn’t about some magical new feature or amazing capability. The excitement was over “faster” and “cheaper.” Classic tech industry stuff.

My wild guess is that if someone comes back to this newsletter 20 years from now, they’ll laugh at how naive I was about lots of things, but they’ll recognize the patterns of AI just like we can recognize the control-panel widgets from 40 years ago. Chat will still be there. It’ll still generate images and videos. And it’ll probably still get things wrong. And people will still be talking about AGI, while the systems keep getting faster and better.

At least, that’s what I hope happens. I’m a technologist who likes to build, and every time I sat down to make something with this tech over the last few years, it would seem like I was working with a totally different tool. It’s been interesting, but in the long run, it’s no fun to build on sand.

Over the last five or six months, things have been coming into focus. When I sit down to write in Claude Code, I know how it’s going to behave. When I ask ChatGPT to make an image, I know how it will probably look, and where it will fall down. It feels like I, as a user, have some control again. I hope everyone gets to feel that way more and more.