Tasty? Poisonous? Hard to tell.

Just yesterday, according to an article in Bloomberg News by Shirin Ghaffary, Anthropic released ten new tools for the finance industry, to help bankers “draft pitch decks for client meetings, review financial statements, and escalate cases for compliance review.” As reported:

“Finance is a great blueprint for the rest of knowledge work,” said Nicholas Lin, Anthropic’s head of product for financial services. Lin added that financial uses of AI are “just a few months behind” the technology’s coding applications, “which we’ve seen massive acceleration in.”

Certain financial services stocks (Morningstar Inc., FactSet Research Systems) fell as a result. So what does this mean? Are AI companies going to come for every industry? Is Claude going to do to everything else what it did to code?

To answer this, I want to zoom way, way, way out.

Part 1: Pitching a Prospect

I built an AI system that researches companies and helps me define and plan long-term product engagements. It’s rough but useful; we call it “Atlas.” I feed it company names from our inbound leads and it models out that company’s business. Then I tell it about software they might need us to build, and it evaluates how that software could drive growth. When asked, it makes a PowerPoint pitch deck.

I recently used one of those decks to pitch a lead (I told the person on the other side how the deck was made and that I was experimenting on them). At their request, I had explored a path where our company would create a replacement for software they currently license. Our system calculated that they could save around $400,000 a year.

They saw the analysis and a bunch of other bits of data in the AI-created deck and said, “These numbers on our costs are…accurate.” They paused before they asked: “What did I actually tell you?”

I thought for a minute. “You told me your annual revenue,” I explained. “Then I had our system research your company and build me a business model, which I used to back out your per-transaction costs. Then I told it about the products we intended to build and asked it to figure out how much we could save. Then it made this deck with schedule and pricing.” I paused. “Pretty cool, right?”

We both sort of sat there on the video call for a moment, processing, and then I went back to the pitch. It was a weird moment. But it was also validating because it meant that this approach worked. (Although take that with a grain of salt, because we haven’t landed the business yet.)

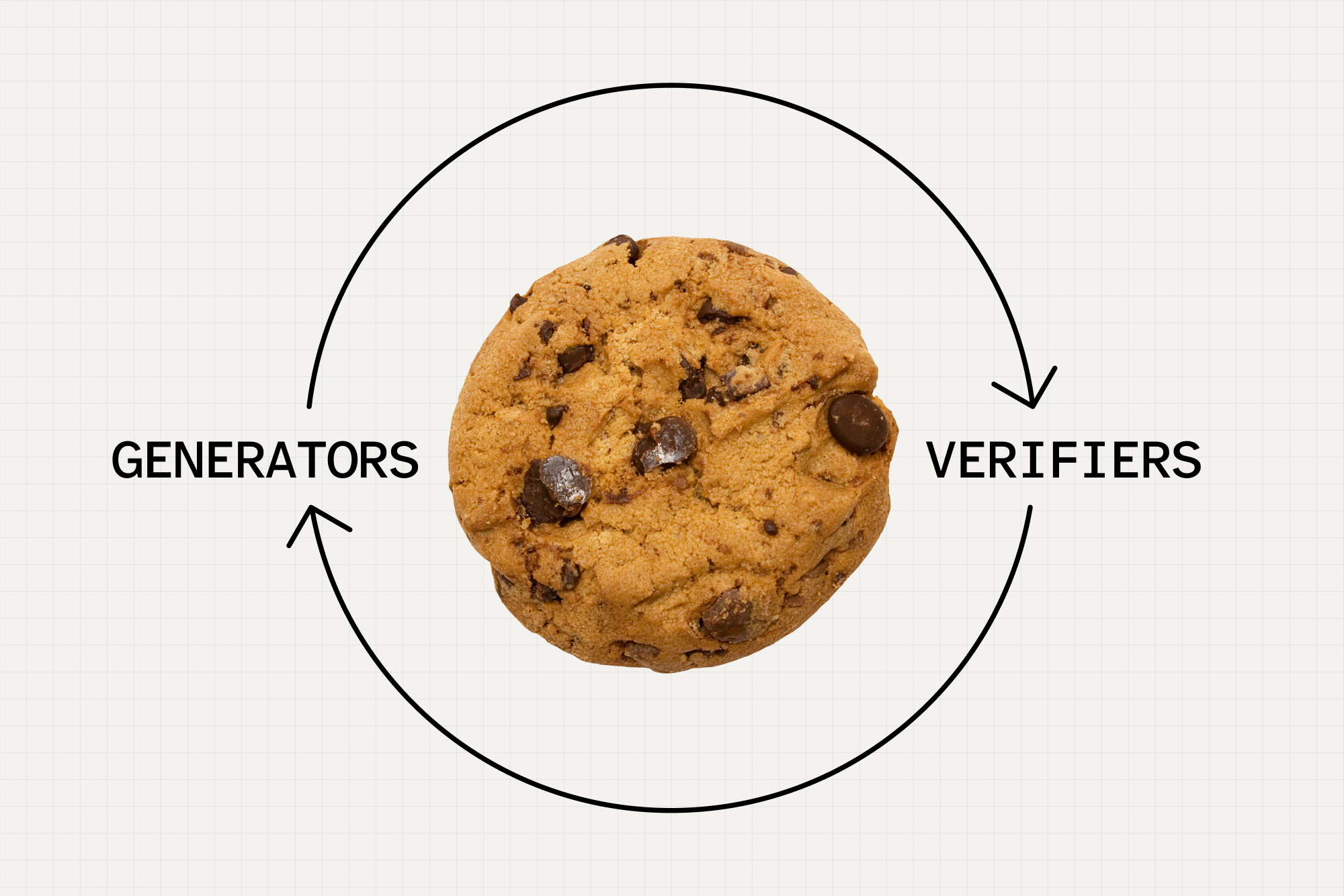

There’s a pattern I keep seeing in software development, and I think it points to where we are headed in our AI future. I want to describe it and see what other people think. I call it the “good loop.” The good loop is actually kind of anti-AI in some ways, in that it doesn’t see AI as the solution; it sees it more as a useful provocation. It’s a subtle framing, so it’s going to require a big, long thought experiment involving cookies.

Part 2: Enter the Test Kitchen

We all know that ChatGPT can produce recipes, except it has a tendency to get little things wrong—sugar, pepper, time in the oven. There have been a bunch of videos of people cooking ChatGPT recipes and being both delighted and disappointed. As with all things AI, you’re on your own: Prompt it incorrectly and it might tell you that it’s fine to replace chicken with peach jam. For the AI recipe video-making people, that’s often part of the fun.

But imagine, for a moment, if ChatGPT had a test kitchen. If it can work for Bon Appétit, it can work for OpenAI. You might wire ChatGPT up to various kitchen things: A fridge, an oven, a meat thermometer, a camera, maybe even a chemical analyzer like the ThermoFisher Scientific Gallery Aqua Master Discrete Analyzer, which I just learned about and now desperately want. Don’t worry about how things get from the fridge, mixed, and into the oven. Just imagine a bunch of robotic Keebler elves.

Now a simple loop emerges: ChatGPT generates a recipe, and the test kitchen verifies the recipe. The report comes back: “Too much salt, try again.” ChatGPT rewrites the recipe with less salt. The test kitchen cooks it: “Not sweet enough.” The next recipe adds more sugar. And so on. After enough loops, the report comes back: “This cookie is 89% tasty.” It’s a good recipe!

You have to watch out, though. The test kitchen only tests what it’s told to test. It might miss that the cookie is filled with arsenic and old bits of tin because it’s not testing for those things. Maybe the robo-elves like the taste of tin so they give it a thumbs up. But that’s less an error in ChatGPT, which is, after all, merely a generator of recipe-shaped things, and more of an error in your own testing framework—which should have only allowed you to prepare food with known, safe ingredients. You should have instructed your elves more wisely, my friend, because if you’ve worked in web technology as long as I have, you know that cookies are often insecure.
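To make the "known, safe ingredients" point concrete, here is a minimal sketch of a verifier that runs a safety gate before any tasting happens. Everything in it is made up for illustration: the allowlist, the function names, and the tin-loving taster are assumptions, not part of any real test kitchen.

```python
# Hypothetical allowlist; a real kitchen would maintain its own.
SAFE_INGREDIENTS = frozenset(
    {"flour", "butter", "sugar", "salt", "egg", "vanilla", "chocolate chips"}
)


def unknown_ingredients(recipe):
    """Return every ingredient name that is not on the allowlist, sorted."""
    return sorted(name for name in recipe if name.lower() not in SAFE_INGREDIENTS)


def verify_recipe(recipe, taste_test):
    """Run the safety gate first; only taste recipes that pass it."""
    rejected = unknown_ingredients(recipe)
    if rejected:
        return 0.0, "rejected, not on the allowlist: " + ", ".join(rejected)
    return taste_test(recipe), "tasted"


def tin_loving_elf(recipe):
    """A flawed taster (hypothetical) that gives everything a thumbs up."""
    return 0.99


if __name__ == "__main__":
    cookie = {"flour": 250, "sugar": 150, "arsenic": 1, "tin": 5}
    print(verify_recipe(cookie, tin_loving_elf))
```

The design choice is that the gate does not trust the taster at all: a recipe with an unknown ingredient never reaches the elves, so their fondness for tin cannot inflate the score.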

Notice what I’m not saying. I’m emphatically not saying the LLM can cook. I’m also not saying, “Trust the LLM.” The LLM can’t taste or chew. It cannot, sadly, experience crunch, at least not until AGI. But it can be a valuable part of a cookie improvement loop. Generate a recipe, verify the recipe and report findings, feed that back to the generator, repeat. Is this the best way to make cookies? Probably not. But if you have a big test kitchen and an abundance of elves, it might be the fastest way to test thousands of cookie recipes.

The loop can repeat until you run out of tokens, or you hit 100% tastiness, or the robot elves unionize. It runs between things that generate information (like LLMs, though you might also just upload a PDF) and things that verify and refine information before feeding their results back into an LLM. If a loop like this produces better cookies, it’s a “good loop.” If it produces worse cookies, it’s a “bad loop.”
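The generate, verify, feed back, repeat cycle can be sketched in a few lines. This is a toy under loud assumptions: `toy_generator` stands in for the LLM, `toy_kitchen` stands in for the elves, and the "ideal" recipe and the adjustment factors are invented so the example runs on its own. The `max_rounds` argument plays the role of running out of tokens.

```python
from dataclasses import dataclass


@dataclass
class Report:
    score: float    # 0.0 to 1.0, e.g. 0.89 for "89% tasty"
    feedback: str   # e.g. "too much salt_g"


def good_loop(generate, verify, target, max_rounds):
    """Generate a candidate, verify it, feed the report back, repeat."""
    candidate, report = None, None
    best, best_report = None, None
    for _ in range(max_rounds):
        candidate = generate(candidate, report)
        report = verify(candidate)
        if best_report is None or report.score > best_report.score:
            best, best_report = candidate, report
        if report.score >= target:
            break
    return best, best_report


# Hypothetical "perfect cookie"; it stands in for the elves' taste buds.
IDEAL = {"salt_g": 3.0, "sugar_g": 150.0}


def toy_kitchen(recipe):
    """Score a recipe by how far each quantity is from the ideal."""
    errors = {k: (recipe[k] - IDEAL[k]) / IDEAL[k] for k in IDEAL}
    worst = max(errors, key=lambda k: abs(errors[k]))
    score = max(0.0, 1.0 - sum(abs(e) for e in errors.values()) / len(errors))
    direction = "too much" if errors[worst] > 0 else "not enough"
    return Report(score, f"{direction} {worst}")


def toy_generator(previous, report):
    """Stand-in for the LLM: nudge whichever ingredient the report named."""
    if previous is None:
        return {"salt_g": 9.0, "sugar_g": 60.0}
    recipe = dict(previous)
    direction, ingredient = report.feedback.rsplit(" ", 1)
    recipe[ingredient] *= 0.8 if direction == "too much" else 1.25
    return recipe


if __name__ == "__main__":
    recipe, report = good_loop(toy_generator, toy_kitchen, target=0.89, max_rounds=20)
    print(recipe, report)
```

Note that the loop itself knows nothing about cookies. Swap in a different generator and verifier and the same skeleton applies, which is the whole point.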

This is in no way a discovery; I’m just trying to describe something I see so we can talk about it. “Agents” are basically good loops, when they don’t erase all your email. It’s also how vibe coding works. You ask the LLM to make code; the LLM spews out a bunch of extremely code-like stuff that may or may not work; your machine runs the code; if it breaks, the bug report gets fed back to the LLM. And so forth. With vibe coding, your laptop is the test kitchen. Claude can’t “code” any more than any LLM can “cook,” but it doesn’t matter to your computer, and the results are often delicious (i.e., they work).
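Here is a rough sketch of the laptop-as-test-kitchen idea, assuming nothing about how any real coding agent is built. `fake_llm` is a hypothetical stand-in for a model call; the only real machinery is Python's standard `subprocess` module, which runs each candidate script and captures its error output so it can be fed back.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_python(source, timeout=10.0):
    """Run source in a fresh interpreter; return (ok, stdout or stderr)."""
    with tempfile.TemporaryDirectory() as tmp:
        script = Path(tmp) / "candidate.py"
        script.write_text(source, encoding="utf-8")
        try:
            result = subprocess.run(
                [sys.executable, str(script)],
                capture_output=True,
                text=True,
                timeout=timeout,
            )
        except subprocess.TimeoutExpired:
            return False, "timed out"
    ok = result.returncode == 0
    return ok, result.stdout if ok else result.stderr


def vibe_code(write_code, task, max_attempts=5):
    """Ask for code, run it, and feed any error back until it works."""
    error = None
    for _ in range(max_attempts):
        source = write_code(task, error)
        ok, output = run_python(source)
        if ok:
            return source, output
        error = output  # the bug report goes back to the generator
    raise RuntimeError("no working code after %d attempts" % max_attempts)


def fake_llm(task, error):
    """Hypothetical generator: buggy on the first try, fixed once it sees an error."""
    if error is None:
        return "total = sum([1, 2, 3])\nprint(totl)\n"  # typo on purpose
    return "total = sum([1, 2, 3])\nprint(total)\n"


if __name__ == "__main__":
    source, output = vibe_code(fake_llm, "add up 1, 2 and 3")
    print(output)
```

One caution: this runs generated code directly on your machine with no sandbox. That is fine for a toy and a bad idea for anything you did not write or read yourself.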

Vibe coding is a natural good loop because the verifier is almost built in: “Write code on my laptop and run it.” That doesn’t require any elves at all. Now Anthropic is coming for finance in the same way—which makes sense, because finance is often about finding ways to verify sets of numbers. (It’s funny that they are selling people on their ability to export PowerPoints. I vibecoded a client-side PowerPoint generator that makes H.P. Lovecraft-style pitch decks in about five minutes last night, just to be annoying.)

I think there are lots of other good loops out there, too, and they’re fun to explore.

Part 3: Vibe Management Consulting

Have you ever wondered what companies like McKinsey, Bain, or Boston Consulting Group really do? There are lots of grim, ironic answers to this question. But here’s a very simplified version: When you hire them, relatively smart people (and their junior associates) analyze your business and industry. They turn what they learn into a “model.” A model is basically a spreadsheet in a cardigan, smoking a pipe. It lets consultants say things like: When more people buy our product, we make more money. But they’re not saying it, “math” is saying it. CEOs love that. It lets them feel less responsible.

A model is very simple software with tons of variables, and it lets you “test” assumptions. There are lots of ways to build models: you can define them in terms of moats, use a business model canvas, run Monte Carlo simulations, or use system dynamics (SD) modeling tools (that’s my favorite; it’s very 70s).
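To show what "testing assumptions" can look like, here is a tiny Monte Carlo sketch of a build-versus-license decision. Every number and range below is invented for illustration and has nothing to do with any real client; the formula (license cost minus amortized build cost and maintenance) is also just an assumption about how such a model might be framed.

```python
import random
import statistics


def simulate_annual_savings(rng, years=5):
    """One random draw of yearly savings from replacing licensed software."""
    # All ranges are hypothetical placeholders, in dollars.
    license_cost = rng.uniform(300_000, 700_000)             # per year
    build_cost = rng.triangular(500_000, 2_000_000, 1_000_000)  # low, high, mode
    maintenance = rng.uniform(50_000, 150_000)               # per year
    return license_cost - (build_cost / years + maintenance)


def monte_carlo(runs=10_000, seed=42):
    """Repeat the draw many times and summarize the spread of outcomes."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    outcomes = [simulate_annual_savings(rng) for _ in range(runs)]
    return {
        "median": statistics.median(outcomes),
        "p_positive": sum(o > 0 for o in outcomes) / runs,
    }


if __name__ == "__main__":
    print(monte_carlo())
```

Instead of one confident number, you get a distribution: a median and a rough probability that the idea saves money at all. That is the part CEOs can blame on "math."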

Remember that client pitch at the beginning and my “Atlas” system? It’s basically a consulting firm simulator. Here’s how it works:

- It gathers lots of news and documents about the client’s industry and business;

- It uses AI to turn that into a database of facts called a “knowledge graph”;

- It queries and formats rigidly-structured, cited data from that knowledge graph;

- It uses that data to code a working business model in a language called Vensim;

- It recalibrates itself based on what it learns over time.
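For readers who have never seen a system dynamics model, here is roughly the kind of thing one expresses: stocks that fill and drain over time. This is a plain Python sketch, not Vensim syntax and not the Atlas model; the customer stock, the rates, and the function name are all hypothetical.

```python
def simulate_customers(initial, new_per_month, churn_rate, months, dt=1.0):
    """Stock-and-flow sketch: one stock (customers), one inflow, one outflow.

    Uses simple Euler integration, the same basic idea SD tools apply.
    """
    customers = float(initial)
    history = [customers]
    steps = int(months / dt)
    for _ in range(steps):
        inflow = new_per_month            # customers gained per month
        outflow = churn_rate * customers  # customers lost per month
        customers += (inflow - outflow) * dt
        history.append(customers)
    return history


if __name__ == "__main__":
    # Hypothetical numbers: 50 new customers a month, 5% monthly churn.
    trajectory = simulate_customers(0, new_per_month=50, churn_rate=0.05, months=240)
    print(round(trajectory[-1]))
```

With a constant inflow and proportional churn, the stock settles toward `new_per_month / churn_rate`. A pitch that claims growth beyond that has to change one of the two rates, and the model makes you say which.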

So that’s neat. The upshot is I can sit in front of my consulting bot and pitch it products and ask, “Will this work at all?” It can’t simply answer. It has to consult a model, search recent news, and prove that the product will make or save money. It has helped me kill many ideas, via math. It also helped me understand how to save a potential client $400,000 a year. That’s the good loop working.

Ultimately, instead of believing myself smart because a robot tells me so, I can have a robot compute all the ways I am stupid. This is better for me and for our clients. It helps me think like a management consultant instead of being a mere software person. But then, unlike a management consultant, our firm can actually build, make, and do things. Have you ever asked a management consultant to build anything? They sweat right through their Brioni suits.

So all right, hooray, I’ve got good loops for: (1) code and (2) business models. Claude’s building loops for legal services and finance and can make business models, too. Great. What else can be verified in the good loop?

The amazing news is that the entirety of scientific culture over the last several thousand years has been oriented around trying to create models that can describe the world and help us verify things with incomplete data. We’ve got business models, sure, as well as economic models and climate models. We have simulation frameworks, constraint optimization, and countless methods of analysis. In terms of quantities of useful statistical and mathematical methods, I would say we are safely at “galore.” Especially when you add in machine learning tools around genomics, materials science, astronomy, healthcare, epidemiology, and on and on.

A huge part of open-source code—the big programming libraries for Python, R, and Julia, and the scientific libraries for Fortran and C/C++—consists of exactly this kind of software. The problem was that it was always really hard to use, and you needed a graduate degree just to understand the Greek letters. But now it’s easy to get whole working systems up and running, and you can learn all about it as you go. If you’re confused, the system can explain it to you, over and over and over.

It’s not all happiness, of course. Over the past few months, I’ve created bugs so terrible while experimenting with this stuff that I wake up in fear for the future. At one point, an LLM was running a model and feeding the output back into its own database so that it was essentially creating its own reality of completely invented numerical facts. Once, Google Gemini started to hallucinate URLs because I forgot to say some magic words to it, then Claude hallucinated what the URLs contained, and turned that into “facts.” In about five minutes, the carefully crafted world I had created was destroyed by multiple LLMs running unsupervised. Another time, I woke up to find that my system had created 180 prototype products for, among other things, fisheries. It all feels pretty radioactive right now—bad loops! But if I add more verifiers to the system, they do go away. It’s just software, after all.
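One verifier that targets exactly these two failures is a provenance gate on the fact database. The sketch below is an assumption about how such a gate could look, not a description of what Atlas does: the `Fact` fields, the `origin` labels, and the `FactStore` class are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    claim: str
    source_url: str
    origin: str  # hypothetical labels: "document" or "model_output"


class FactStore:
    """Only accepts facts that trace back to a page that was really fetched."""

    def __init__(self, fetched_urls):
        self._fetched = set(fetched_urls)
        self.facts = []
        self.rejected = []

    def _reject_reason(self, fact):
        if fact.origin != "document":
            return "derived from model output, not a source document"
        if fact.source_url not in self._fetched:
            return "source URL was never fetched"
        return None

    def add(self, fact):
        """Store the fact if it passes both checks; otherwise log why not."""
        reason = self._reject_reason(fact)
        if reason is not None:
            self.rejected.append((fact, reason))
            return False
        self.facts.append(fact)
        return True


if __name__ == "__main__":
    store = FactStore(fetched_urls=["https://example.com/annual-report"])
    store.add(Fact("They sell fish", "https://example.com/made-up", "document"))
    print(store.rejected)
```

The first check stops a model from feeding its own output back in as fact. The second stops a hallucinated URL from ever becoming a citation, because the store only trusts URLs that the fetching code, not the LLM, says were retrieved.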

Does all this mean you should download genomics data sets and buy a CRISPR machine and breed your own super-ants to conquer the world? No. You should work with experts when you want to breed super-ants. What if they’re the stinging kind and you get stung to death? You have to be careful.

What I’m saying is, management consulting will be just fine. We will still need lots of experts. But lots more people can roleplay as experts, and while I know it’s de rigueur to assume that humans are slackjawed doofuses in red riding hoods who are all easily deceived by an AI dressed up like grandma, we actually have a little more agency than that, right? Despite all the evidence, I still believe we can learn things, and that we have real, actual value, even if we’re made of meat.

In some ways, I’m less curious about what it means when everyone can code, and more curious to see what it means when every small or medium org has easy access to statistical methods, geographic information systems, and business modeling. The small press cooperative can predict tote bag demand. The food bank can model out carrot distribution. The library system can cluster patron interests by branch and discover the most relevant books to acquire. All without anything, or anyone, in the middle charging them for this information.

Risks of super-ants aside, I like this approach enough that I want to will it into existence. So I’ll make a big, weird prediction: Software development is not going away, and there will be tons of software people in the future. However, software development in the future will be less and less about coding and deploying software. The machines are going to take that over. Instead, it’ll be more and more about cataloging and identifying good loops between generative systems and verification systems, then implementing and testing those loops. The outputs of these systems will be pretty familiar: Dashboards, PowerPoints, documents, charts. Everything will look surprisingly recognizable in the future, albeit chattier. There will just be much more of it, and under the hood, there will be a lot more statistical methods.

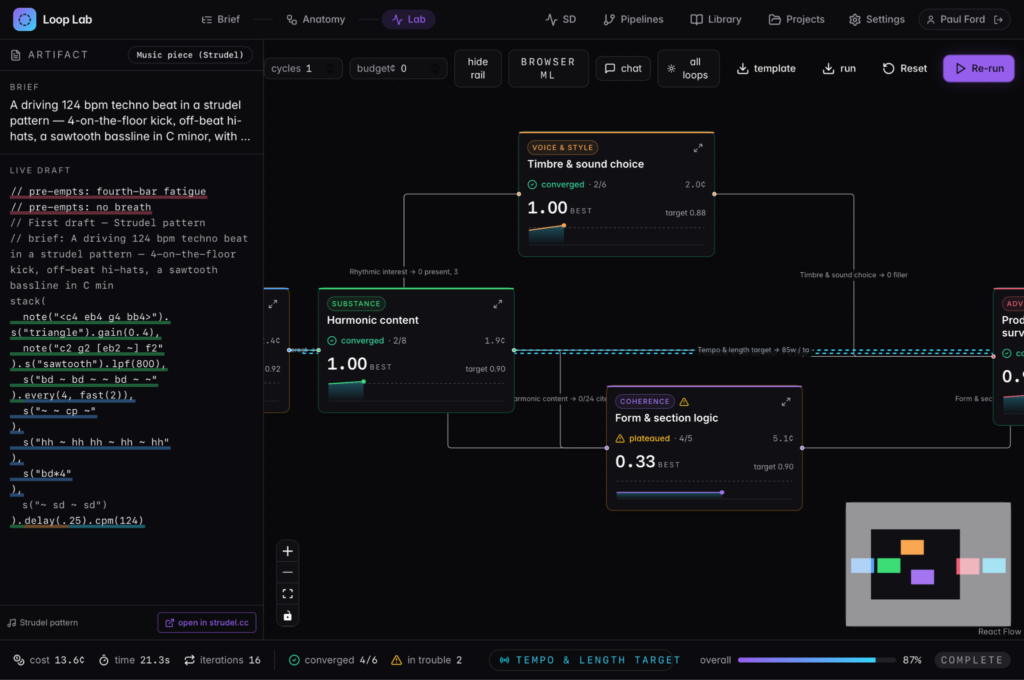

Part of Atlas is a section I call “Loop Lab.” It looks like this:

I built that in a weekend, mostly while sleeping. (That’s not a brag; you can do stuff like this too.) Loop Lab helps me research and design good loops. For example, in the screenshot it’s writing loops in strudel, a program that lets you code musical loops (you can hear the output if you click). I know this is incredibly extra. It really is a wild time.

Now for the big question from the beginning of this essay: Will Anthropic or OpenAI get there first and make all the good loops ahead of time? And thus conquer finance, healthcare, law, and everything else?

Maybe. I doubt it. Claude’s new finance tools look good but they aren’t quite right for what I do all day. They might, however, be a really good backstop and verification layer. But the larger point is that value isn’t really inside software; it’s in what you do with it across relationships. No matter how rich and handsome you are, you can’t marry into every family. I think the huge AI companies will build a whole bunch of tools to gain market share in big industries and get lots of customers, but it will also be much harder than they hope to really conquer the enterprise market. The enterprise market is filled with fleshy, annoying humans who need everything to be a certain way, and are exhausting and recalcitrant and needy. The middle market is even worse: it’s filled with fleshy humans with less money.

But I actually hope the big labs build things like Loop Lab—except, you know, way better. Tools to build tools. I don’t expect they will, though, because “let’s replace junior analysts at Citigroup” is lower-hanging fruit. But that’s okay, because I can build Loop Lab myself.

I know that many people might find me too cheerful about all of this. I often regret my natural enthusiasm. I also tend to think things will happen very quickly, and I’m usually wrong there, too. Nonetheless, I believe the good loops are coming, and many are already here.

Part 4: A Step at a Time

None of that changes the fact that this is too much change at once. Not only are we being blasted with AI news all day (I’m part of the problem, especially if you see my LinkedIn), but the new LLMs can hack into everything, which means we need to work to make stuff more efficient and secure. Claude Mythos uncovered 271 serious vulnerabilities in Firefox, for example, one for each remaining Firefox user. (Don’t email me, I love and use Firefox.) More of that kind of stuff is coming.

So despite all of my visions of exciting loops and statistical thrills, it’s also true that we need to clean up our room before we get to play with our new toys. Everyone wants to AI everything, but 95% of AI projects are frankly not going that well, even as 5% are going almost mindbogglingly well. We have to find ways to level that out and distribute the advantages. It can’t just be me sitting in the dark getting one last prompt in before bedtime. That’s a reason Aboard is an AI consulting firm.

That said, once we’ve all got things nice and tidy, and cleaned up all our tech debt (or rather swept it all under the bed in an efficient manner), I do think there will be a whole cool world to explore: a more accessible, more interesting, and more connected world of loops inside loops inside loops. And I think it will be a lot weirder than anyone expects, because all the wizardry of the last few millennia of science and industry will slowly become available to way more people. Small businesses will be able to experiment with pricing strategies like Stanford professors. Our world could be filled with S curves, J curves, and lots of other curves besides. Eventually, AI will stop being front and center and we will be able to discuss anything else. May God bring that day soon. I like humans, despite our recent setbacks, and I’m still holding out hope we do well with our new superpowers.

That’s my optimistic read on what’s possible. You might disagree, and that’s good. You’ve been right before. In the meantime, back to cleaning up the room. We can sing a little song while we do it.